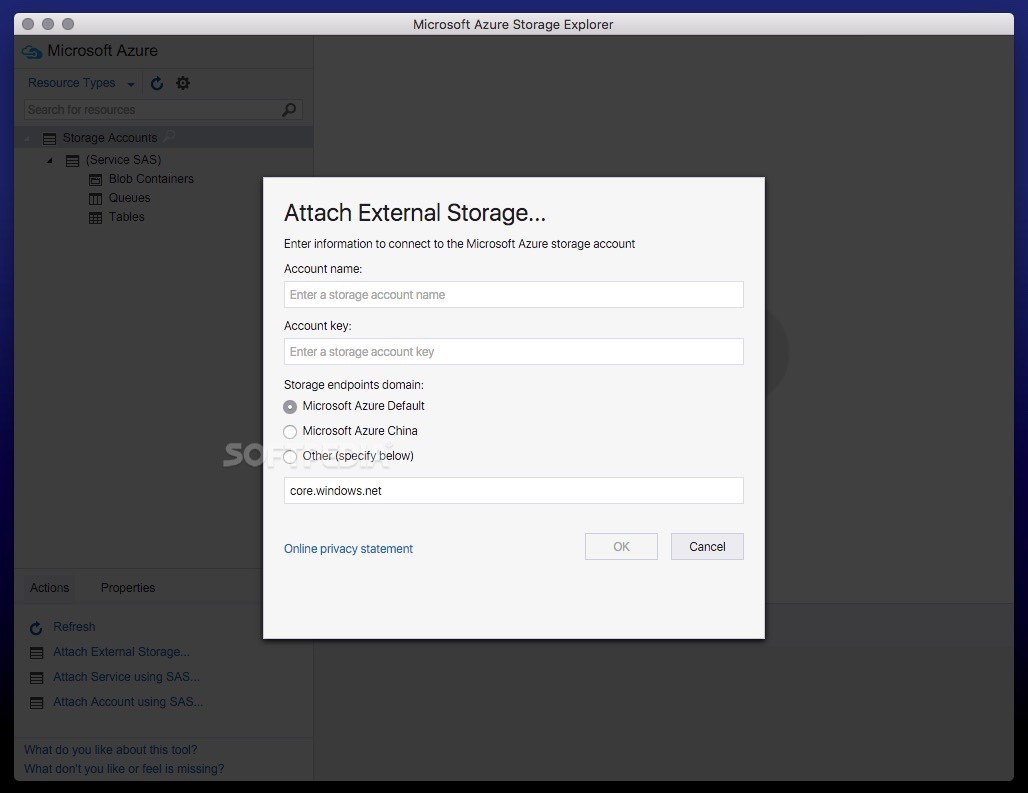

I'm trying to save an image to our Azure blob storage. Thanks to the helpful response provided via the link below the code snippet, I was able to successfully save the file to our top-level container. Unfortunately, I would like the file to save to a subdirectory in that container, and, for the life of me, I cannot get it to work.

Currently, the image is saving to our "images" container. Within that container is a folder, "members", and I would like the files to save to that subdirectory. I tried to pass "images/members" to GetBlockBlobReference, but then the file just didn't save at all (or, at least, I can't find it). This seems like it should be pretty simple.

    CloudBlobClient blobClient = account.CreateCloudBlobClient();
    CloudBlobContainer container = blobClient.GetContainerReference("images");
    CloudBlockBlob blockBlob = container.

Because GetContainerReference("images") already selects the container, the name passed to GetBlockBlobReference is relative to that container, so the subfolder belongs in the blob name as a prefix: "members/" followed by the file name, not "images/members".

You may also create a new folder in a container using Microsoft Azure Storage Explorer: there is a 'New Folder' button that allows you to create a folder in a container, and you can copy the blobs/files between the folders. Creating shared access links is very easy in Azure Storage Explorer as well: right-click the newly uploaded file and choose Get Shared Access Signature.

To create containers and folders from a pipeline instead, the PowerShell script below creates the containers and folders listed in a JSON config file. Note that the script will not delete containers and folders (or set authorizations on them). This is of course possible, but make sure to test this very thoroughly; even with testing, a human error in configuring the config file is easy to make and could cause lots of data loss! In order to create permissions for all subfolders and files, you will have to set up DFS on one of your Azure servers and use NTFS permissions from there to go deeper than the container level.

    # This PowerShell will create the containers provided in the JSON file
    # It does not delete or update containers and folders or set authorizations
    # (assumption: parameters inferred from the variables the script uses)
    param ([string] $ResourceGroupName, [string] $StorageAccountName, [string] $JsonLocation)
    # Combine path and file name for JSON file. Create an extra parameter for the filename if
    # you need different files/configurations per environment.
    $path = Join-Path -Path $JsonLocation -ChildPath "config_storage_account.json"
    Write-Output "Extracting containernames from $($path)"
    # Check existence of file path on the agent
    if (Test-Path -Path $path -PathType Leaf) {
        # Get all container objects from JSON file
        $Config = Get-Content -Raw -Path $path | ConvertFrom-Json
        $Containers = $Config.containers | Get-Member -MemberType NoteProperty | Select-Object -ExpandProperty Name
        # Check Storage Account existence and get the context of it
        $StorageCheck = Get-AzStorageAccount -ResourceGroupName $ResourceGroupName -Name $StorageAccountName -ErrorAction SilentlyContinue
        if ($null -ne $StorageCheck) {
            Write-Output "Storage account $($StorageAccountName) found"
            $context = $StorageCheck.Context
            # Loop through container array and create containers if they don't exist
            foreach ($name in $Containers) {
                # First a little cleanup of the container
                # (assumption: trim and lowercase; the original cleanup code is missing)
                $container = $name.Trim().ToLower()
                Write-Output "Checking existence of container $($container)"
                $ContainerCheck = Get-AzStorageContainer -Context $context -Name $container -ErrorAction SilentlyContinue
                if ($null -ne $ContainerCheck) {
                    Write-Output "Container $($container) already exists"
                } else {
                    Write-Output "Creating container $($container)"
                    New-AzStorageContainer -Name $container -Context $context | Out-Null
                }
                Write-Output "Retrieving folders from config"
                # (assumption: each container property in the JSON holds its list of folders)
                $folders = @($Config.containers.$name)
                Write-Output "Found $($folders.Count) folders in config for container $($container)"
                foreach ($folder in $folders) {
                    # 1) Replace backslashes by a forward slash
                    $path = $folder.Replace("\", "/")
                    # 3) Check if path ends with a forward slash (assumption: add one if missing)
                    if (-not $path.EndsWith("/")) { $path = "$path/" }
                    $FolderCheck = Get-AzDataLakeGen2Item -FileSystem $container -Context $context -Path $path -ErrorAction SilentlyContinue
                    if ($null -ne $FolderCheck) {
                        Write-Output "Path $($folder) exists in container $($container)"
                    } else {
                        New-AzDataLakeGen2Item -Context $context -FileSystem $container -Path $path -Directory | Out-Null
                        Write-Output "Path $($folder) created in container $($container)"
                    }
                }
            }
        } else {
            Write-Output "Storageaccount: $($StorageAccountName) not available, containers not setup."
        }
    } else {
        Write-Output "File $($path) not found, containers not setup."
    }

If you integrate this in your existing Data Factory or Synapse YAML pipeline, then you only need to add one PowerShell step. Make sure you have a checkout step to copy the config and PowerShell file from the repository to the agent. You may also want to add a (temporary) treeview step to check the paths on your agent. This makes it easier to configure paths within your YAML code. The location of the config file is passed to the script as an argument:

    JsonLocation $(Pipeline.
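The folder-path cleanup that the script applies before it checks or creates a folder (replace backslashes by a forward slash, then check the trailing slash) can be tried in isolation. Below is a minimal sketch in Python, written under the assumption that the trailing-slash check means "append one when it is missing". The function name normalize_folder_path and the sample folder names are hypothetical and do not come from the original script.

```python
def normalize_folder_path(folder: str) -> str:
    """Clean up a folder path from the config before it is sent to the storage API."""
    # 1) Replace backslashes by a forward slash
    path = folder.replace("\\", "/")
    # 3) Check if path ends with a forward slash
    #    (assumption: append one when it is missing)
    if not path.endswith("/"):
        path += "/"
    return path


if __name__ == "__main__":
    # Hypothetical folder entries, as they might appear in the JSON config
    for raw in ["raw\\sales\\2023", "curated/finance/", "staging"]:
        print(f"{raw!r} -> {normalize_folder_path(raw)!r}")
```

Normalizing first means the existence check and the create call both see the same path, so a folder written as `raw\sales` in the config is not created a second time next to `raw/sales/`.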
